Chiranjeevi Maddala

April 3, 2026

Watch any team build an AI agent for the first time and you will notice a pattern. The first hour is productive — tools get connected, APIs get wired up, the orchestration framework gets chosen. The team is moving fast. Then comes the second hour. And the third. And something quietly shifts.

The agent starts doing unexpected things. It loops on a task it should have completed. It calls a tool five times when once was enough. It produces an answer that is technically correct but entirely beside the point. It freezes at an edge case nobody anticipated. The debugging begins — and it goes nowhere obvious, because there is no bug. The code is fine. The model is capable. The tools work.

The problem is something earlier and deeper: nobody designed how the agent should think.

This is not a technology problem. It is a design problem. And it is the most common failure mode in enterprise AI agent development today — not model limitations, not tool failures, but the absence of a structured approach to the cognitive architecture of the agent before a single line of code is written.

Most agent failures are not technical. They are definitional.

What the field needs — and currently lacks — is a shared vocabulary and a structured method for this work. A mental model for thinking about how an agent should reason, not just what it should do. I am proposing one. I am calling it Agentic Thinking.

What Computational Thinking Taught Us

In 2006, computer scientist Jeannette Wing published a short essay arguing that computational thinking (the ability to decompose problems, recognise patterns, build abstractions, and design algorithms) was a foundational cognitive skill for the modern age, not just for programmers. Her core insight was simple and powerful: thinking like a computer scientist is itself a learnable, transferable discipline.

The impact of naming that practice was significant. Once computational thinking had a name, it could be taught, measured, debated, and applied across domains. A biologist could use it. A city planner could use it. A teacher could build a curriculum around it. The name created the scaffold for a discipline.

We are at an equivalent inflection point today. The machine has changed — it is no longer a deterministic processor of instructions, but an autonomous reasoner operating in open-ended environments, using judgment at every step. Building one well requires a fundamentally different kind of thinking. Not computational thinking. Agentic thinking.

What Is Agentic Thinking?

Agentic Thinking is the discipline of designing how an AI agent should reason, act, and adapt — before writing a line of code.

It is a cognitive skill, not a technical one. A practitioner exercising Agentic Thinking is asking a fundamentally different class of questions than a developer building an agent. Not "which framework should I use?" or "how do I connect this API?" — but: What is the agent's actual task, and how should it be broken into tractable steps? What context is essential for good decisions, and what is noise? What signals should trigger which reasoning paths? In what order should tools be sequenced? Where should the agent stop, escalate, or refuse? What should happen when the agent encounters something it was not designed for?

These are design questions. They require deliberate thought, not configuration. And they need to be answered before the code is written — because the answers shape everything that follows.

The Six Dimensions of Agentic Thinking

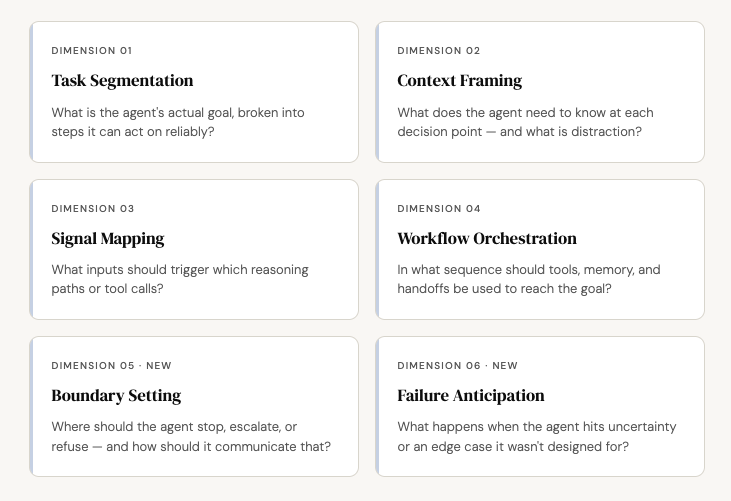

Agentic Thinking is not a single skill. It is a practice composed of six distinct cognitive dimensions. Some map onto Computational Thinking; two are entirely new — unique to the challenge of designing autonomous agents that act with real-world consequences.

1. Task Segmentation

Most agent failures begin here. The agent is given a goal that sounds clear — "research this competitor," "resolve this customer issue," "review this pull request" — but has never been decomposed into steps the agent can act on with precision. Task Segmentation is the practice of breaking the agent's purpose into discrete, executable units, each with a clear input, a clear success condition, and a clear output. The discipline is in the specificity: not "research this topic" but "identify five primary sources, extract the main argument from each, and flag any contradictions." Vague goals produce unpredictable agents. Precise task definitions produce consistent ones.
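As an illustration, the "clear input, clear success condition, clear output" rule can be written down as data before any agent code exists. The sketch below is one possible shape, not a prescribed format: `TaskStep`, `RESEARCH_PLAN`, and `validate_plan` are hypothetical names, and the success conditions are simplified stand-ins for real checks.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class TaskStep:
    """One executable unit: a clear input, success condition, and output."""
    name: str
    input_key: str                      # what the step reads
    output_key: str                     # what the step produces
    is_done: Callable[[object], bool]   # success condition on the output


# Hypothetical segmentation of the vague goal "research this topic".
RESEARCH_PLAN = [
    TaskStep("identify_sources", "topic", "sources",
             lambda out: isinstance(out, list) and len(out) == 5),
    TaskStep("extract_arguments", "sources", "arguments",
             lambda out: isinstance(out, dict) and len(out) == 5),
    TaskStep("flag_contradictions", "arguments", "contradictions",
             lambda out: isinstance(out, list)),
]


def validate_plan(plan: list[TaskStep], initial_key: str) -> list[str]:
    """Return one problem per step whose input no earlier step produces."""
    available = {initial_key}
    problems = []
    for step in plan:
        if step.input_key not in available:
            problems.append(f"{step.name}: missing input '{step.input_key}'")
        available.add(step.output_key)
    return problems
```

Calling `validate_plan(RESEARCH_PLAN, "topic")` returns an empty list because every step's input is produced upstream. Removing the first step makes the gap visible at design time rather than in production.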

2. Context Framing

An agent with too little context guesses. An agent with too much context loses the thread. Context Framing is the practice of designing what the agent knows at each decision point — and deliberately excluding everything else. This means crafting the system prompt with surgical precision, managing what gets retrieved from memory and when, and structuring tool outputs so that what the agent receives is always signal rather than noise. Context Framing is why two agents with identical tools can produce wildly different results: one was designed to know the right things at the right moment; the other was not.
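One way to make Context Framing concrete is an explicit allowlist per step plus a size budget. This is a minimal sketch under assumptions: the step names, the `CONTEXT_ALLOWLIST` table, and the character-based budget are all illustrative, and a real system would more likely count tokens than characters.

```python
# Hypothetical per-step allowlist: each step sees only the keys named here,
# listed in priority order.
CONTEXT_ALLOWLIST = {
    "identify_sources": ["topic"],
    "extract_arguments": ["topic", "sources"],
    "flag_contradictions": ["arguments"],
}


def frame_context(step: str, memory: dict[str, str], max_chars: int = 2000) -> str:
    """Build the context for one step from allowlisted keys, within a size budget."""
    parts = []
    used = 0
    for key in CONTEXT_ALLOWLIST.get(step, []):
        if key not in memory:
            continue
        entry = f"{key}: {memory[key]}"
        if used + len(entry) > max_chars:
            break  # budget exhausted: lower-priority entries are dropped
        parts.append(entry)
        used += len(entry)
    return "\n".join(parts)
```

Anything in `memory` that is not allowlisted for the step, such as a full chat history, never reaches the agent at that decision point.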

3. Signal Mapping

At every step, an agent faces routing decisions: call a tool or ask a clarifying question? Produce output or loop again? Escalate or proceed? Signal Mapping is the practice of designing these decision points explicitly — identifying the specific inputs, thresholds, keywords, or data shapes that should trigger each path. Without it, agents improvise. And improvisation at scale, in production, is expensive. Signal Mapping also connects directly to how Anthropic's Agent Skills architecture works: Claude decides when to invoke a Skill based on relevance — and Signal Mapping is precisely the cognitive design work that makes that judgment reliable rather than accidental.
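To show what designing decision points explicitly can look like, here is a small routing sketch. The signals (`confidence`, `missing_fields`, `attempts`), the thresholds, and the keyword list are assumptions chosen for illustration; they are not taken from any framework or from the Agent Skills specification.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    CALL_TOOL = "call_tool"
    ASK_USER = "ask_user"
    ESCALATE = "escalate"
    RESPOND = "respond"


@dataclass
class Observation:
    confidence: float          # assumed 0.0-1.0 score from an upstream step
    missing_fields: list[str]  # required inputs not yet supplied
    attempts: int              # how many times this step has already run
    text: str = ""


ESCALATION_KEYWORDS = ("refund", "legal", "chargeback")  # illustrative only
MAX_ATTEMPTS = 3
MIN_CONFIDENCE = 0.7


def route(obs: Observation) -> Route:
    """Map explicit signals to a path; the order of checks encodes priority."""
    if any(word in obs.text.lower() for word in ESCALATION_KEYWORDS):
        return Route.ESCALATE
    if obs.attempts >= MAX_ATTEMPTS:
        return Route.ESCALATE          # stop looping on a stuck task
    if obs.missing_fields:
        return Route.ASK_USER
    if obs.confidence < MIN_CONFIDENCE:
        return Route.CALL_TOOL         # gather more evidence first
    return Route.RESPOND
```

The value is less in the code than in the table it forces you to write: every path has a named trigger, so a loop or a redundant tool call can be traced to a specific signal.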

4. Workflow Orchestration

This is the closest Agentic Thinking dimension to classical algorithm design: sequencing tools, memory reads and writes, sub-agent handoffs, and output generation into a coherent, goal-directed workflow. The question is not "what tools does the agent have?" but "in what order, under what conditions, should it use them?" As agent capability layers grow richer — Anthropic's Agent Skills ecosystem already includes partner-built Skills from Atlassian, Figma, Canva, and Notion — Orchestration becomes the practitioner's most demanding task. More capability means more possible sequences. Orchestration is the design work that turns a library of Skills into a working agent rather than a cabinet of unused tools.
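The "in what order, under what conditions" question can be sketched as an ordered list of steps, each guarded by a condition on the current state. Everything here is hypothetical: the tools are stubbed as plain functions, and `run_workflow` is a toy runner, not how any particular orchestration framework or Skill loader works.

```python
from typing import Callable

State = dict[str, object]
Step = tuple[str, Callable[[State], bool], Callable[[State], State]]


def run_workflow(steps: list[Step], state: State) -> tuple[State, list[str]]:
    """Run steps in order; a step runs only if its condition holds for the current state."""
    trace = []
    for name, condition, action in steps:
        if condition(state):
            state = {**state, **action(state)}  # merge the step's output into state
            trace.append(name)
    return state, trace


# Hypothetical tools, stubbed as plain functions.
def search(state: State) -> State:
    return {"sources": ["s1", "s2"]}


def summarise(state: State) -> State:
    return {"summary": f"{len(state['sources'])} sources reviewed"}


def notify(state: State) -> State:
    return {"notified": True}


WORKFLOW: list[Step] = [
    ("search", lambda s: "sources" not in s, search),
    ("summarise", lambda s: bool(s.get("sources")), summarise),
    ("notify", lambda s: bool(s.get("urgent", False)), notify),
]
```

Running `run_workflow(WORKFLOW, {"topic": "x"})` executes `search` then `summarise` and skips `notify`. The returned trace makes the chosen sequence inspectable.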

5. Boundary Setting

This dimension has no equivalent in Computational Thinking, because classical computation has no concept of refusal. Agents do — and the cost of getting boundaries wrong is high. Boundary Setting means defining, before deployment, what the agent should not attempt: tasks outside its competence, actions with irreversible consequences, requests that require human judgment. An agent without well-defined boundaries will eventually act with confidence in precisely the places it should not. The discipline here is as much about communication as restriction: a well-bounded agent does not just stop — it explains why, routes to the right resource, and escalates with clarity rather than silence.
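A boundary that explains itself and routes onward can be captured in a small lookup. The action names, reasons, and destinations below are invented for illustration, and a production agent would need richer matching than exact action names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Refusal:
    reason: str
    route_to: str


# Hypothetical boundary table: action name -> (reason, where to route).
BOUNDARIES = {
    "delete_account": ("irreversible action", "account-admin queue"),
    "issue_refund": ("requires human judgment", "billing team"),
    "give_legal_advice": ("outside the agent's competence", "legal desk"),
}


def check_boundary(action: str) -> Optional[Refusal]:
    """Return a Refusal saying why and where to go, or None if the action is allowed."""
    if action in BOUNDARIES:
        reason, route_to = BOUNDARIES[action]
        return Refusal(reason=reason, route_to=route_to)
    return None


def explain(action: str, refusal: Refusal) -> str:
    """Turn a refusal into a message that explains rather than just stops."""
    return (f"I can't perform '{action}' ({refusal.reason}). "
            f"Routing this to: {refusal.route_to}.")
```

Each of the three categories named above (outside competence, irreversible, needs human judgment) appears as a reason, so the refusal carries its own explanation and next step.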

6. Failure Anticipation

Real-world agents encounter ambiguity, contradictory data, tool failures, and requests they were never designed for. Failure Anticipation is the practice of designing the agent's behaviour under uncertainty before deployment — not discovering it in production after something has gone wrong. It asks: when the agent does not know, what does it do? When a tool returns an unexpected error, how does the agent respond? When the task is genuinely ambiguous, does the agent guess, ask, or halt? The answers to these questions are not defaults to be accepted but decisions to be made, deliberately, as part of the design process.
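The guess, ask, or halt decision can be made explicit in a wrapper around every tool call. This is a sketch under stated assumptions: `AmbiguousRequest` is a hypothetical exception a tool might raise, the retry limit is arbitrary, and the policy shown (retry timeouts, ask on ambiguity, halt on anything else) is one design choice among many.

```python
class AmbiguousRequest(Exception):
    """Hypothetical: raised by a tool when the request has more than one plausible reading."""


def call_with_policy(tool, arg, max_retries: int = 2) -> dict:
    """Call a tool and turn each failure mode into an explicit, designed outcome."""
    for _attempt in range(max_retries + 1):
        try:
            return {"status": "ok", "result": tool(arg)}
        except TimeoutError:
            continue                      # transient failure: retry up to the limit
        except AmbiguousRequest as exc:
            return {"status": "ask", "question": str(exc)}
        except Exception as exc:          # unanticipated failure: halt, do not guess
            return {"status": "halt", "error": repr(exc)}
    # Every attempt timed out: hand off instead of looping forever.
    return {"status": "escalate", "error": f"timed out {max_retries + 1} times"}
```

The point is that none of the four outcomes is a default. Each was chosen, which is what separates designed behaviour under uncertainty from behaviour discovered in production.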

A Note on Anthropic's Agent Skills

In October 2025, Anthropic launched Agent Skills — defined as "organised folders of instructions, scripts, and resources that agents can discover and load dynamically to perform better at specific tasks." Released as an open standard in December 2025, Agent Skills provides the capability layer of the modern agentic stack.

The relationship with Agentic Thinking is complementary and precise. Skills define what the agent can do. Agentic Thinking defines how the agent decides.

Skills are the vocabulary — the named, composable units of capability an agent can invoke. Agentic Thinking is the grammar — the cognitive design that governs when a skill gets invoked, in what sequence, under what conditions, and with what stopping criteria. A practitioner who equips an agent with excellent Skills but applies no Agentic Thinking has a well-stocked toolbox and no blueprint. The agent will act. It just will not act well.

How to Apply Agentic Thinking: A Starting Practice

Agentic Thinking is a design discipline, not a checklist. But for practitioners encountering it for the first time, a structured starting practice helps build the habit. Here is a five-step approach to apply before your next agent build.

1. Write the agent's goal in one sentence, then rewrite it three times. Each rewrite should be more specific than the last. The final version should be precise enough that a colleague with no prior context could execute it reliably without a single clarifying question. If you cannot reach that precision in three rewrites, the goal is not yet agent-ready: go back and do Task Segmentation first.

2. Map the decision points before you touch the tools. List every moment in the workflow where the agent will need to choose between two or more paths. For each decision point, write down what input drives the choice and what each path leads to. This is Signal Mapping, and it reveals the routing logic that most teams leave implicit until something breaks in production.

3. Design the context budget for each step. For each step in the workflow, write down exactly what the agent needs to know, and nothing more. Treat context as a budget, not a dump. If you find yourself writing "the agent should have access to all previous messages," pause and ask: which messages, specifically, matter for this decision?

4. Write the refusal cases before the happy path. Before designing what the agent does when everything goes right, design what it does when things go wrong. List five scenarios the agent was not designed for. For each, decide: does it stop, escalate, ask, or attempt? Writing the refusal cases first forces a boundary clarity that happy-path design consistently obscures.

5. Run a pre-mortem, not just a post-mortem. Before deploying, ask your team: imagine this agent has failed in production in three distinct ways. What were those failures? Work backwards from each failure to the design gap that caused it. This surfaces edge cases that testing alone rarely finds, because testing tends to probe the paths you already imagined.

Why This Needs a Name

Naming a practice changes how people approach it. Before computational thinking had a name, practitioners were decomposing problems and designing algorithms — but they could not teach it systematically, build on each other's instincts, or transfer it deliberately across domains. The name created the shared vocabulary that made the discipline possible.

Agentic Thinking is already happening. Experienced agent builders are already doing task segmentation, boundary setting, and failure anticipation — but informally, intuitively, often without recognising that these are distinct skills that can be deliberately practised and improved. The result: every team reinvents the same hard-won lessons independently. The practice does not compound across the field.

The name does not create the practice. It makes the practice visible enough to be taught, shared, and built upon.

As Anthropic's Agent Skills standard drives rapid ecosystem growth — more packaged capabilities, more partner Skills, more agentic surface area across enterprise tools — the cognitive complexity of agent design will only increase. More capability means more decisions about what to invoke, when, and in what sequence. Agentic Thinking is how practitioners stay ahead of that complexity — not by limiting what agents can do, but by designing, deliberately, how they decide.

The bottleneck in agent development today is not the model. It is not the tools. It is the thinking that happens — or doesn't happen — before any of it is built.