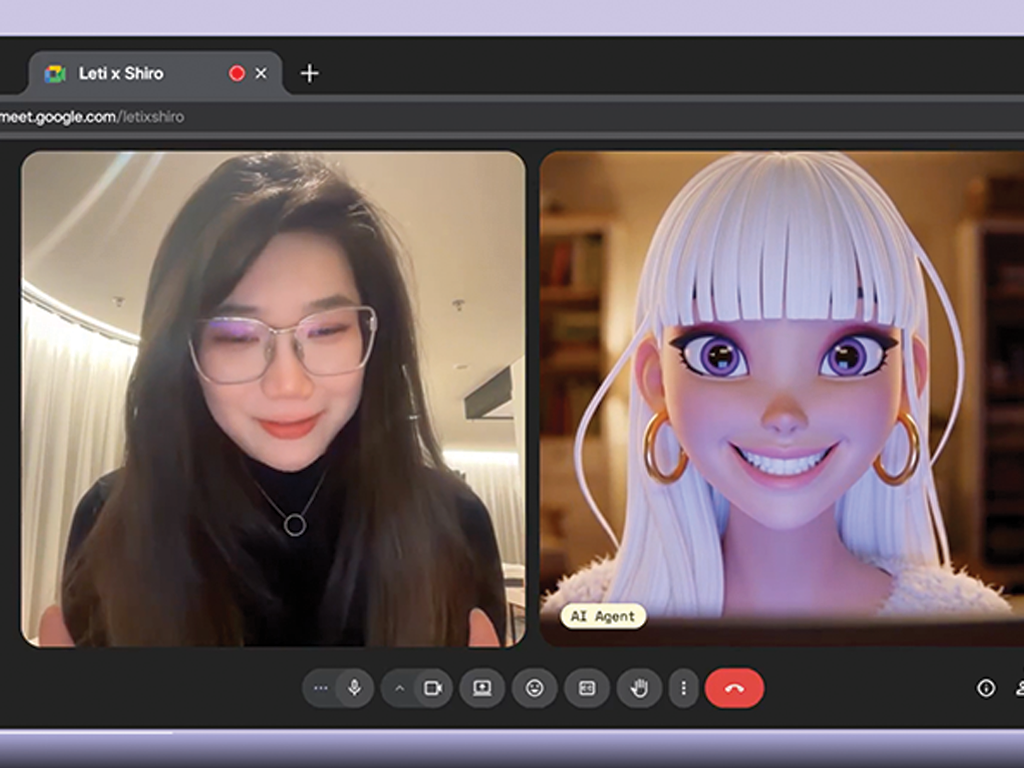

Pika launches PikaStream 1.0 — real-time video chat for AI agents

AI agents can now join video calls with a face, a voice, and the ability to act. Pika Labs' new model enables live face-to-face interaction with persistent memory, personality, and in-call task execution — a shift in how humans and agents collaborate.

What was announced

Pika Labs has released a beta video chat skill for AI agents, powered by their new real-time model PikaStream 1.0. The skill can be plugged into any AI agent — not just Pika's own "AI Self" product — via an open-source GitHub repository. Developers can access it through the Pika Developer API, with pricing at $0.50 per minute of call time.

Unlike previous AI video systems that required longer render times, PikaStream 1.0 streams at 24 frames per second with approximately 1.5 seconds of latency on a single H100 GPU. The model is designed for identity-consistent talking avatars — agents that retain appearance, personality, and memory across a live conversation.

"Conversations tend to go better with a face and a voice. The skill preserves memory and personality, and enables real-time adaptability. And if you use it with your Pika AI Self, they'll be able to execute agentic tasks during the call." — Pika Labs

Key capabilities

Persistent context across the call — The agent retains knowledge of workspace context, recent activity, and known contacts throughout the entire conversation, not just a single exchange.

Agents that act, not just talk — Agents can execute tasks like booking meetings or drafting reports while the video call is actively happening, without stepping out of the conversation.

Consistent face and personality — The avatar responds with emotionally appropriate facial expressions in real time. Users can generate a professional headshot or bring their own image as the agent's face.

Plugs into any AI agent — The pikastream-video-meeting skill is open-source on GitHub, integrating with Claude Code, OpenClaw, Hermes, and other agent frameworks.

Key numbers

24fps — streaming frame rate on a single H100 GPU

~1.5 seconds — latency, a clear improvement over prior AI video systems

Any agent — works with Claude, OpenClaw, or any agent via the open-source skill

$0.50 per minute — pricing via the Pika Developer API
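To put the figures above in practical terms, here is a minimal sketch of the arithmetic. It uses only the numbers quoted in the announcement ($0.50 per minute, 24 frames per second, roughly 1.5 seconds of latency). The function names are illustrative. The linear proration of partial minutes is an assumption, since the announcement does not say how billing is rounded.

```python
from decimal import Decimal

# Figures quoted in the announcement.
PRICE_PER_MINUTE_USD = Decimal("0.50")
FRAMES_PER_SECOND = 24
LATENCY_SECONDS = Decimal("1.5")


def call_cost_usd(minutes):
    """Estimated cost of a call of the given length.

    Assumption: partial minutes are prorated linearly. The actual
    billing granularity is not stated in the announcement.
    """
    return PRICE_PER_MINUTE_USD * Decimal(str(minutes))


def frames_behind():
    """Approximate number of frames the stream lags at the quoted latency."""
    return int(FRAMES_PER_SECOND * LATENCY_SECONDS)


if __name__ == "__main__":
    print(f"30-minute call: ${call_cost_usd(30)}")
    print(f"Frames of delay at 24fps: {frames_behind()}")
```

Under these assumptions, a 30-minute call comes to $15.00, and the avatar runs about 36 frames behind the live conversation.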

Current limitations

The skill is in beta, and demos show visible glitches in some outputs. Google Meet is the only supported platform at launch; Zoom and FaceTime support is on the roadmap. Joining a call requires a Pika Developer Key, Python 3.10+, and an available credit balance.
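The three requirements listed above can be checked before a call is attempted. The following is a hedged sketch, not part of the Pika skill: the function name, the `PIKA_DEV_KEY` environment variable, and the way the credit balance is passed in are all hypothetical, since the announcement does not describe how the key or balance are exposed.

```python
import os
import sys


def missing_prerequisites(python_version, developer_key, credit_balance):
    """Return a list of unmet requirements (empty if all are met).

    All parameter names are illustrative. How the real skill reads the
    key or reports the balance is not described in the announcement.
    """
    problems = []
    if tuple(python_version[:2]) < (3, 10):
        problems.append("Python 3.10 or newer is required")
    if not developer_key:
        problems.append("Pika Developer Key is not set")
    if credit_balance <= 0:
        problems.append("credit balance is empty")
    return problems


if __name__ == "__main__":
    # PIKA_DEV_KEY is a hypothetical variable name used for illustration.
    key = os.environ.get("PIKA_DEV_KEY")
    # The balance is hard-coded here; a real check would look it up.
    for problem in missing_prerequisites(sys.version_info, key, credit_balance=0):
        print("Missing:", problem)
```

Returning a list rather than raising on the first failure lets a setup script report every missing requirement in one pass.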

Why this matters for AI Ready School

AI tutors and learning companions may soon look you in the eye. For Cypher-style personal learning companions, face-to-face AI interaction could dramatically deepen engagement — especially for younger students who respond to social cues.

The "agentic tasks during the call" feature is a direct signal that AI collaboration is moving from text to presence. Schools teaching agentic thinking must now prepare children for working with agents that can see, speak, and act simultaneously.

Teacher-facing tools like Morpheus could evolve toward conversational interfaces — an AI colleague visible on a call, co-planning lessons and reviewing student data in real time, rather than through a dashboard alone.

The open-skill architecture is significant. Pika has shown that agentic video presence can be modular — schools and ed-tech developers can build on top, creating domain-specific learning avatars tied to curriculum or student profiles.

Children growing up today will interact with video-capable AI by default. The "Human First, AI Next" philosophy must evolve to address what healthy, bounded interaction with a face-and-voice AI looks like in a classroom.